Final Project


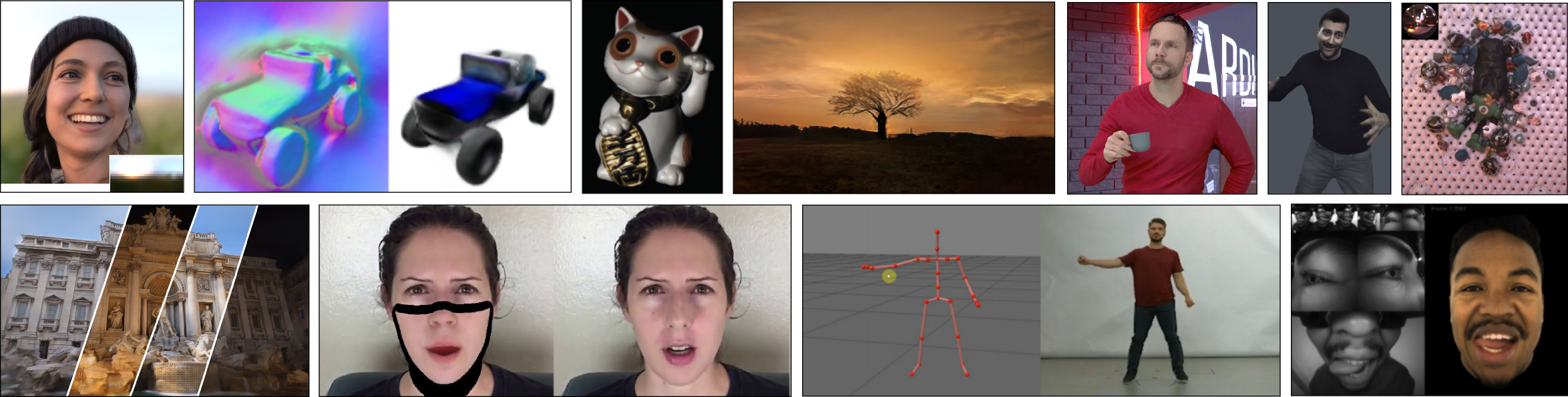

This image was taken from State of the Art on Neural Rendering by Tewari et al.


| Title | Authors | Link |
|---|---|---|
| Generative Co-learning for Image Segmentation | Robert Skinker, Eric Youn | link |
| Concept Ablation in Diffusion Models | Anusha Kamath, Naveen Suresh, Srikumar Subramanian | link |
| Generative Models for Illumination Recovery in Low Light | Adithya Praveen, Lulu Ricketts, Shruti Nair | link |
| Text-to-Style Reconstruction for Diffusion Style Transfer | Shun Tomita | link |
| MaskGIT-Edit | Joel Ye | link |
| Supporting Cultural Representation in Text-to-Image Generation | Zhixuan Liu, Beverley-Claire Okogwu | link |
| TellUrTale | Eileen Li, Simon Seo, Yu-Hsuan Yeh | link |
| Controllable LiDAR Scene Generation with Diffusion Models | Haoxi Ran | link |
| Spatial-Temporal Domain Adaptation via Cycle-Consistent Adversarial Network | Nasrin Kalanat | link |
| Visual Model Diagnosis by Style Counterfactual Synthesis | Jinqi Luo, Yinong Wang | link |
| Contrastive Unpaired MRI Harmonization | Maxwell Reynolds | link |
| Temporally Consistent Video Retargeting without Dependence on Sequential Data | Shihao Shen, Abishek Pavani | link |
| Let’s try and pose? | Ninaad Rao, Anusha Rao, Greeshma Karanth | link |
| Ancient to Modern Photos using GANs | Akhil Eppa, Roshini Rajesh Kannan, Sanjana Moudgalya | link |
| Erase-Anything with Text Prompts | Sayali Deshpande | link |
| Language-driven Human Pose Animation | Lia Coleman | link |
| Manifold Contrastive Learning for Unpaired Image-to-image Translation | Shen Zheng, Qiyu Chen | link |
| Improving Text-to-Image Synthesis with GigaGAN and Novel Filter Bank | Zhiyi Shi, Linji Wang | link |
| GANs to Understand How the Human Brain Makes Sense of Natural Scenes | Tejas Bana | link |
| Real-Time Style Transfer for VR Experiences | Mitchell Foo | link |
| Stable Diffusion for UI Generation | Faria Huq | link |
| Latent Light Field Diffusion for 3D Generation | Ruihan Gao, Hanzhe Hu | link |
| Neural Object Relighting | George Ralph, William Giraldo | link |
| Controllable Video Generation with Stable Diffusion | Haoyang He | link |
| Multi-Modal Instruction Image Editing | Tiancheng Zhao, Chia-Chun Hsieh | link |

Congratulations to all students on their amazing work!

Introduction

Welcome to the final project for the class. The goal is to show us something novel based on the material we cover in class. You can try a new modification of a method, a particularly novel application, or a close analysis of the properties of an existing method. We’ll read your project proposals and give feedback early on so that you can get awesome results on cool problems! Feel free to come to our office hours to discuss your progress and challenges over the rest of the semester. We’re happy to help!

Group Policy

You can work in groups of 1-3 people. We’ll expect the standard of work to be roughly proportional to the number of members in your group. In other words, larger groups will be graded to a somewhat higher standard as far as the scale of the project attempted and the amount of work completed.

Important Dates

- 3/27: Project Proposal Due

- 4/26: Presentation Date

- 5/8: Project Code and Website Due

Note: We will not allow late days on the project.

Project Proposal

We’d like to see a couple of paragraphs describing what you want to do for your project. Be sure to describe the end output, technique, novelty, dataset usage, and action plan. Include a couple of sentences placing your proposal in context among related work. Submit the proposal as a PDF file to Canvas. You are encouraged to include images or hand-drawn figures. The page limit is two pages, but one page should be more than enough.

Presentation

You’ll need to give a 5-minute presentation about your project in class. We’ll announce the time for this soon. The presentation should give a quick overview of the method and data and show us the cool outputs of your work! If you can’t make the time we announce, we’ll ask you to submit an equivalent video.

Code and Website Submission

You’ll need to submit (1) the code for your project to Canvas and (2) a website in the project directory of your course website, as you did for other projects. This time, we’d really like to see a thorough description of the method, the outputs of comparison methods (if applicable), the outputs of your algorithm, any math you do, and ablations (if applicable). The website will be the primary deliverable, and we encourage you all to do a good job with it, as it lets you show people what you’ve made in a nicely presented way.

Good luck!